Details

With the spread of physical sensors and social sensors, we are living in a world of big sensor data. Although these two kinds of sensors generate heterogeneous data, they often provide complementary information. Combining them enables sensor-enhanced social media analysis, which can lead to a better understanding of situations as they evolve.

We present two works that illustrate this general theme. In the first work, we use event-related information detected by physical sensors to filter and then mine geo-located social media data in order to obtain high-level semantic information. Specifically, we apply a suite of visual concept detectors to video camera feeds to generate “camera tweets” and develop a novel multi-layer tweeting cameras framework. We fuse “camera tweets” and social media tweets via a “Concept Image” (Cmage).
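As a minimal sketch of what a camera tweet might carry, the record below assumes that each visual concept detector emits a confidence in [0, 1] and that a fixed threshold decides which concepts a camera reports. The `CameraTweet` class, its field names, the threshold value, and the message format are hypothetical illustrations, not the framework's actual schema.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Dict, Optional


@dataclass
class CameraTweet:
    """Hypothetical "camera tweet": the visual concepts one camera detected at one time."""
    camera_id: str
    lat: float
    lon: float
    timestamp: datetime
    concepts: Dict[str, float]  # concept name -> detector confidence in [0, 1]

    def text(self) -> str:
        """Render the detected concepts as a short status line, sorted by name."""
        names = ", ".join(sorted(self.concepts))
        return f"[{self.camera_id} @ {self.timestamp:%H:%M}] I see: {names}"


def make_camera_tweet(
    camera_id: str,
    lat: float,
    lon: float,
    timestamp: datetime,
    scores: Dict[str, float],
    threshold: float = 0.5,  # assumed cut-off, not a value from the framework
) -> Optional[CameraTweet]:
    """Keep concepts whose detector score reaches the threshold; return None if none do."""
    detected = {name: s for name, s in scores.items() if s >= threshold}
    if not detected:
        return None  # quiet frames produce no tweet
    return CameraTweet(camera_id, lat, lon, timestamp, detected)


tweet = make_camera_tweet(
    "cam-042", 40.758, -73.985, datetime(2014, 6, 1, 18, 30),
    {"crowd": 0.82, "vehicle": 0.31, "umbrella": 0.64},
)
print(tweet.text())  # [cam-042 @ 18:30] I see: crowd, umbrella
```

Returning `None` for frames with no confident detections keeps the stream sparse, so only frames with something to report become tweets.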

Cmages are 2-D maps of concept signals that serve as a common data representation to facilitate event detection. We define a set of operators and analytic functions that users can apply to Cmages not only to discover occurrences of events but also to analyze patterns of evolving situations. We demonstrate the feasibility and effectiveness of our framework on a large-scale dataset containing feeds from 150 CCTV cameras in New York City together with Twitter data.
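A minimal sketch, assuming a Cmage can be modelled as a grid over a geographic bounding box in which each cell accumulates one concept's signal from geo-located observations (camera tweets or social media tweets). The `Cmage` class, the weighted-sum fusion operator `combine`, and the threshold operator `hotspots` are hypothetical stand-ins chosen for illustration, not the operator set defined in the framework described above.

```python
from typing import List, Optional, Tuple

BBox = Tuple[float, float, float, float]  # (lat_min, lat_max, lon_min, lon_max)


class Cmage:
    """Hypothetical Cmage: a rows x cols grid holding one concept's signal over a lat/lon box."""

    def __init__(self, concept: str, bbox: BBox, rows: int, cols: int):
        self.concept = concept
        self.bbox = bbox
        self.rows, self.cols = rows, cols
        self.cells: List[List[float]] = [[0.0] * cols for _ in range(rows)]

    def _cell(self, lat: float, lon: float) -> Optional[Tuple[int, int]]:
        """Map a coordinate to its (row, col) cell, or None if it lies outside the box."""
        lat_min, lat_max, lon_min, lon_max = self.bbox
        if not (lat_min <= lat < lat_max and lon_min <= lon < lon_max):
            return None
        r = int((lat - lat_min) / (lat_max - lat_min) * self.rows)
        c = int((lon - lon_min) / (lon_max - lon_min) * self.cols)
        # Clamp guards against floating-point rounding right at the upper edge.
        return min(r, self.rows - 1), min(c, self.cols - 1)

    def add_point(self, lat: float, lon: float, weight: float = 1.0) -> None:
        """Accumulate one geo-located observation into its cell; points outside the box are ignored."""
        cell = self._cell(lat, lon)
        if cell is not None:
            r, c = cell
            self.cells[r][c] += weight

    def combine(self, other: "Cmage", alpha: float = 0.5) -> "Cmage":
        """Assumed fusion operator: cell-wise alpha * self + (1 - alpha) * other."""
        if (self.bbox, self.rows, self.cols) != (other.bbox, other.rows, other.cols):
            raise ValueError("Cmages must share the same bounding box and grid size")
        out = Cmage(self.concept, self.bbox, self.rows, self.cols)
        out.cells = [
            [alpha * a + (1 - alpha) * b for a, b in zip(row_a, row_b)]
            for row_a, row_b in zip(self.cells, other.cells)
        ]
        return out

    def hotspots(self, threshold: float) -> List[Tuple[int, int]]:
        """Assumed event-detection operator: cells whose signal reaches the threshold."""
        return [
            (r, c)
            for r in range(self.rows)
            for c in range(self.cols)
            if self.cells[r][c] >= threshold
        ]
```

For example, building one `Cmage` from camera tweets and one from social media tweets over the same grid, fusing them with `combine`, and calling `hotspots` on the result flags only the cells whose combined evidence for the concept reaches the chosen threshold.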

We also describe our preliminary “Tweeting Camera” prototype, in which a smart camera tweets semantic information through Twitter so that people can follow it and stay updated about events around the camera's location.

Our second work combines photography knowledge learned from social media with camera sensor data to provide real-time photography assistance.

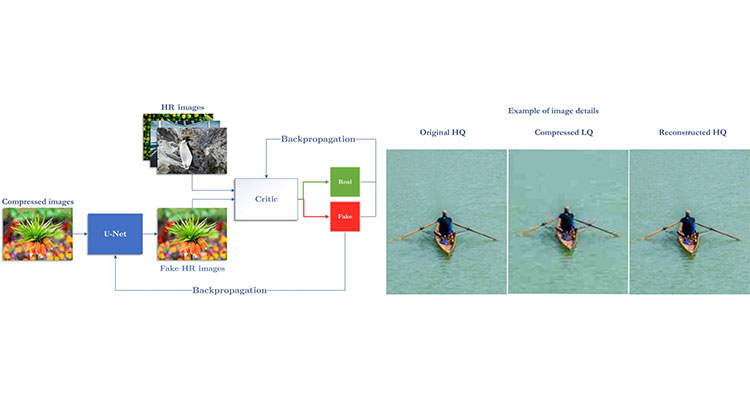

Professional photographers use their knowledge and exploit the current context to take high-quality photographs, but amateur users often find this difficult. Social media and physical sensors provide an opportunity to improve the photo-taking experience for such users. We have developed a machine-learning-based photography model augmented with contextual information that influences the quality of photo capture, such as time, geo-location, environmental conditions, and image type.
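To make the idea of a context-aware model concrete, here is a deliberately simple stand-in: a k-nearest-neighbour lookup that recommends camera settings from well-rated example photos taken in similar contexts. It is not the learned model described above; the `ExamplePhoto` fields, the two normalized context features (time of day and ambient light level), and the default `k` are assumptions for illustration.

```python
import math
from dataclasses import dataclass
from statistics import median
from typing import Dict, List, Sequence


@dataclass
class ExamplePhoto:
    """Hypothetical training example mined from well-rated social media photos."""
    context: Sequence[float]  # normalized features, e.g. (time of day / 24h, ambient light level)
    scene: str                # image type, e.g. "landscape" or "portrait"
    iso: int
    shutter_s: float          # exposure time in seconds
    aperture_f: float         # f-number


def recommend_settings(
    context: Sequence[float], scene: str, examples: List[ExamplePhoto], k: int = 3
) -> Dict[str, float]:
    """Median ISO, shutter time and f-number of the k nearest examples of the same scene type.

    Features are assumed to be pre-scaled to comparable ranges, since Euclidean
    distance would otherwise be dominated by the largest-valued feature.
    """
    candidates = [e for e in examples if e.scene == scene]
    if not candidates:
        raise ValueError(f"no examples for scene type {scene!r}")
    nearest = sorted(candidates, key=lambda e: math.dist(context, e.context))[:k]
    # The median is less sensitive than the mean to one unusual neighbour.
    return {
        "iso": median(e.iso for e in nearest),
        "shutter_s": median(e.shutter_s for e in nearest),
        "aperture_f": median(e.aperture_f for e in nearest),
    }
```

Geo-location or other sensor readings could be appended to the context vector in the same way, provided they are scaled to ranges comparable with the existing features.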

The sensors available in a camera system are used to infer the current scene context. Because scene composition and camera parameters play a vital role in the aesthetics of a captured image, our method addresses the problem of learning both photographic composition and camera parameters. To illustrate the proposed composition learning, we also propose computing photographic composition bases, which we call eigenrules and baserules. The proposed system can therefore provide real-time feedback to the user on scene composition and camera parameters while the scene is being captured.
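A minimal sketch, assuming eigenrules are obtained by principal component analysis (by analogy with eigenfaces) over composition maps, such as downsampled saliency maps of well-composed photographs. The function names, the input representation, and the reconstruction-error score used for feedback are hypothetical; baserules are not modelled here.

```python
import numpy as np


def eigenrules(maps: np.ndarray, n_components: int = 4):
    """PCA over flattened composition maps (a hypothetical reading of "eigenrules").

    maps: array of shape (n_photos, h, w).
    Returns (mean map of shape (h, w), bases of shape (n_components, h, w), singular values).
    """
    n, h, w = maps.shape
    X = maps.reshape(n, h * w).astype(float)
    mean = X.mean(axis=0)
    # Rows of vt are orthonormal principal directions, ordered by decreasing singular value.
    _, s, vt = np.linalg.svd(X - mean, full_matrices=False)
    return mean.reshape(h, w), vt[:n_components].reshape(-1, h, w), s[:n_components]


def composition_score(saliency: np.ndarray, mean: np.ndarray, bases: np.ndarray) -> float:
    """Distance of a live-view map from the eigenrule subspace (lower = closer to learned compositions)."""
    x = saliency.ravel() - mean.ravel()
    B = bases.reshape(len(bases), -1)
    recon = B.T @ (B @ x)  # orthogonal projection onto the span of the bases
    return float(np.linalg.norm(x - recon))
```

A live-view frame whose map has a high `composition_score` is far from every learned composition, which could trigger a suggestion to reframe the shot.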