Insights

The RIS project was co-funded by Regione Toscana (POR CReO FESR 2007–2013, BANDO UNICO R&S, year 2012).

MICC was in charge of the design and development of a collaborative smart environment based on natural interaction, of multi-device semantic annotation and access to sensitive data, and of a Computer Vision module for the automatic analysis of digital medical images and reports.

We developed a multi-modal multi-user system able to manage multiple inputs and outputs through three main interactive devices:

- VIDEOWALL (based on Microsoft Kinect®);

- TABLETOP (multi-touch table);

- TABLET.

The main functions of the system are:

- login and secure access to sensitive data;

- viewing and editing of digital multimedia medical images and reports;

- browsing and smart search of the semantic registry;

- human and automatic annotation;

- interaction through a multi-touch metaphor.

Finally, we developed the RIS system using the following software tools:

- C# WPF

- HTML5

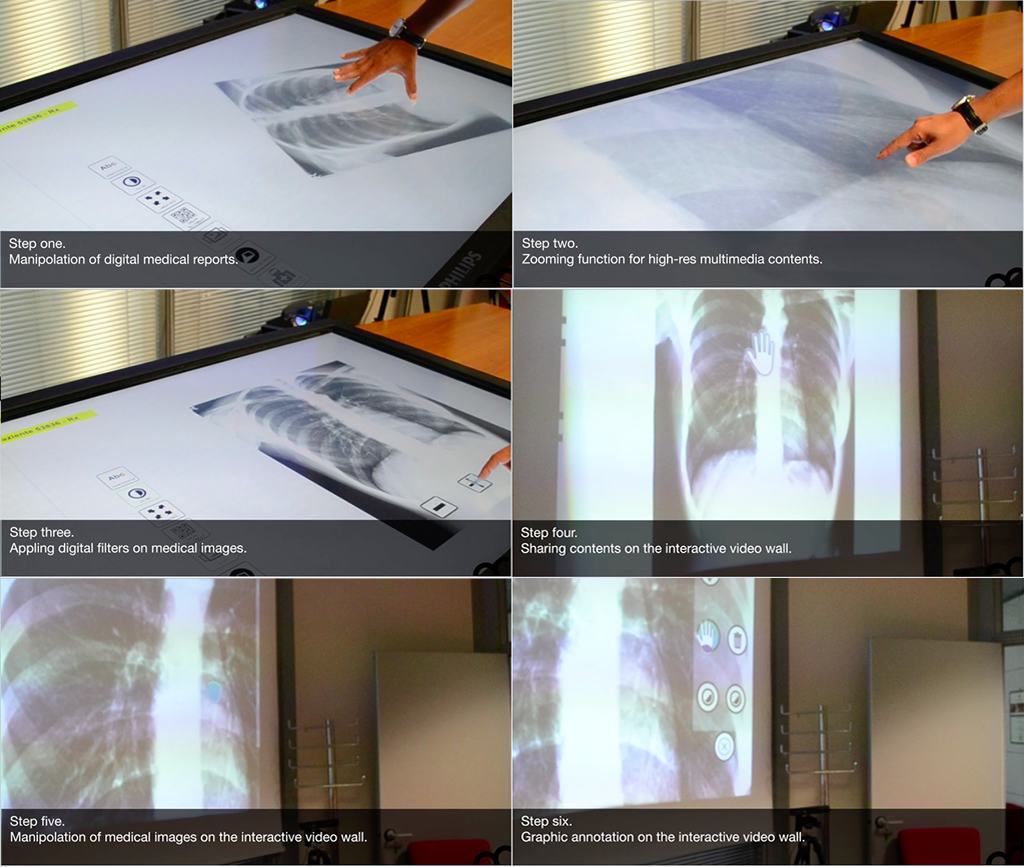

The system functions developed for the first prototype are described in the following steps:

- step 1: viewing and editing of digital medical images and reports. Once the patient has been identified, the system displays the list of available reports;

- step 2: changing the image size. Integration with XLimage services allows the system to display clinical images at high resolution and to make them interactive through the graphical interface of the interactive table, while also optimizing the use of system resources;

- step 3: the system provides image-filtering tools to improve the readability of the report;

- step 4: the multimodality of the interactive environment makes it possible to use different interactive surfaces for input and output, such as the VIDEOWALL, which offers better visibility for an audience or a group of passive users;

- steps 5 and 6: the VIDEOWALL also provides functions such as zooming, dragging and image filtering, as well as graphic annotation of the image, which facilitates the discussion phase;

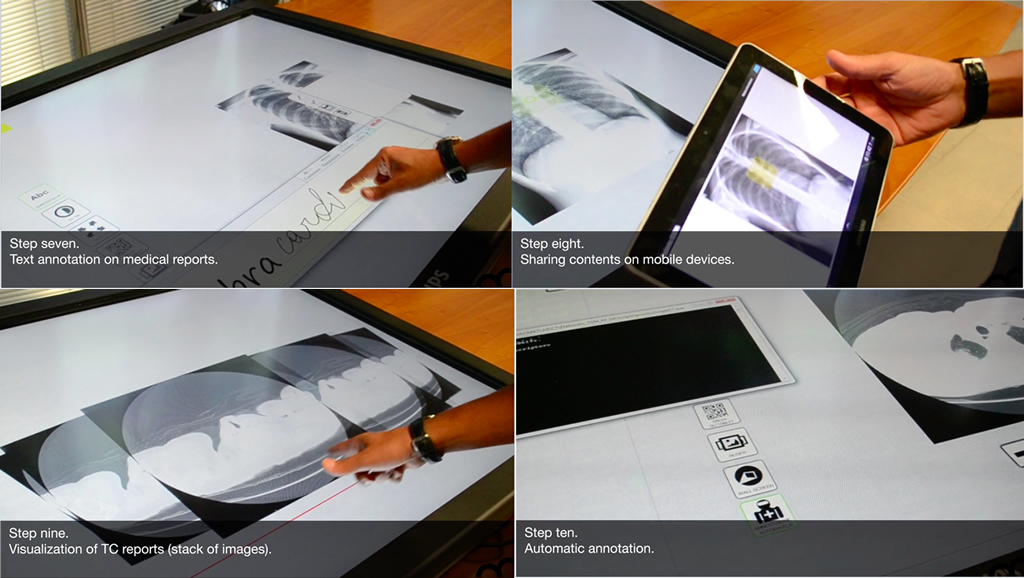

- step 7: textual annotations can be associated with a two-dimensional coordinate (for reports such as X-rays) or with a three-dimensional one (for tomographic images). The system provides a smart tool for composing text annotations through natural handwriting, as well as a virtual keyboard;

- step 8: multimodality also extends to mobile devices (such as a tablet) for private viewing and annotation. Functions activated on the tablet can affect all the other interactive surfaces (TABLETOP and VIDEOWALL);

- step 9: the system also provides functions for viewing a stack of images, as in the case of a CT scan;

- step 10: the CV module for automatic annotation can detect pulmonary nodules, including very small ones that are difficult to detect with the human eye. The annotation is made available directly on the corresponding report for validation by the medical staff.
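To make steps 7 to 10 more concrete, the following is a minimal sketch of how an annotation anchored to a 2D or 3D coordinate could be modelled and propagated from one surface to the others. It is written in Python for brevity and is not the project's C# WPF or HTML5 code. Every name in it (`Annotation`, `SharedSession`, `register_surface`, `for_slice`, the `"cv-module"` author label, the sample image identifier) is hypothetical and chosen for illustration only.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional


@dataclass(frozen=True)
class Annotation:
    """A textual note anchored to a point on an image (hypothetical model)."""
    image_id: str
    text: str
    x: float
    y: float
    z: Optional[int] = None   # slice index in a tomographic stack; None for 2D images
    author: str = "human"     # assumed labels: "human" or "cv-module"

    @property
    def is_3d(self) -> bool:
        return self.z is not None


@dataclass
class SharedSession:
    """Stores annotations and notifies every registered surface except the sender."""
    annotations: List[Annotation] = field(default_factory=list)
    listeners: Dict[str, Callable[[Annotation], None]] = field(default_factory=dict)

    def register_surface(self, name: str, on_annotation: Callable[[Annotation], None]) -> None:
        self.listeners[name] = on_annotation

    def add(self, annotation: Annotation, source: str) -> None:
        self.annotations.append(annotation)
        for name, notify in self.listeners.items():
            if name != source:  # the originating surface already shows the annotation
                notify(annotation)

    def for_slice(self, image_id: str, z: int) -> List[Annotation]:
        """Annotations to draw while a given slice of a stack is displayed."""
        return [a for a in self.annotations if a.image_id == image_id and a.z == z]


# Usage: an annotation created on the tablet reaches the other two surfaces.
session = SharedSession()
received: Dict[str, List[Annotation]] = {"TABLETOP": [], "VIDEOWALL": []}
session.register_surface("TABLETOP", received["TABLETOP"].append)
session.register_surface("VIDEOWALL", received["VIDEOWALL"].append)
session.register_surface("TABLET", lambda a: None)

note = Annotation("ct-001", "possible nodule", x=120.0, y=88.5, z=14, author="cv-module")
session.add(note, source="TABLET")
```

Keeping the slice index optional lets a single record type cover both X-ray style reports and tomographic stacks. The `author` field is one possible way to mark automatic annotations that still await validation by the medical staff.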

This first prototype was evaluated very positively by the official project reviewer in July 2015.

Future work, planned for the next year, will focus on the evaluation of the system by specialized users such as physicians, orthopedists and radiologists. The aim is to produce comprehensive documentation on the usability, accessibility, effectiveness and efficiency of the RIS system.