Insights

The development of accurate and unconstrained face recognition algorithms is a long term and challenging goal of the computer vision community. The term “unconstrained” implies a system can perform successful identifications regardless of face image capture presentation, environment and subject conditions. This project aims at improving the performance of unconstrained face recognition systems in real world scenarios.

MICC tasks in the GLAIVE/JANUS project are: Face Recognition and Face Tracking.

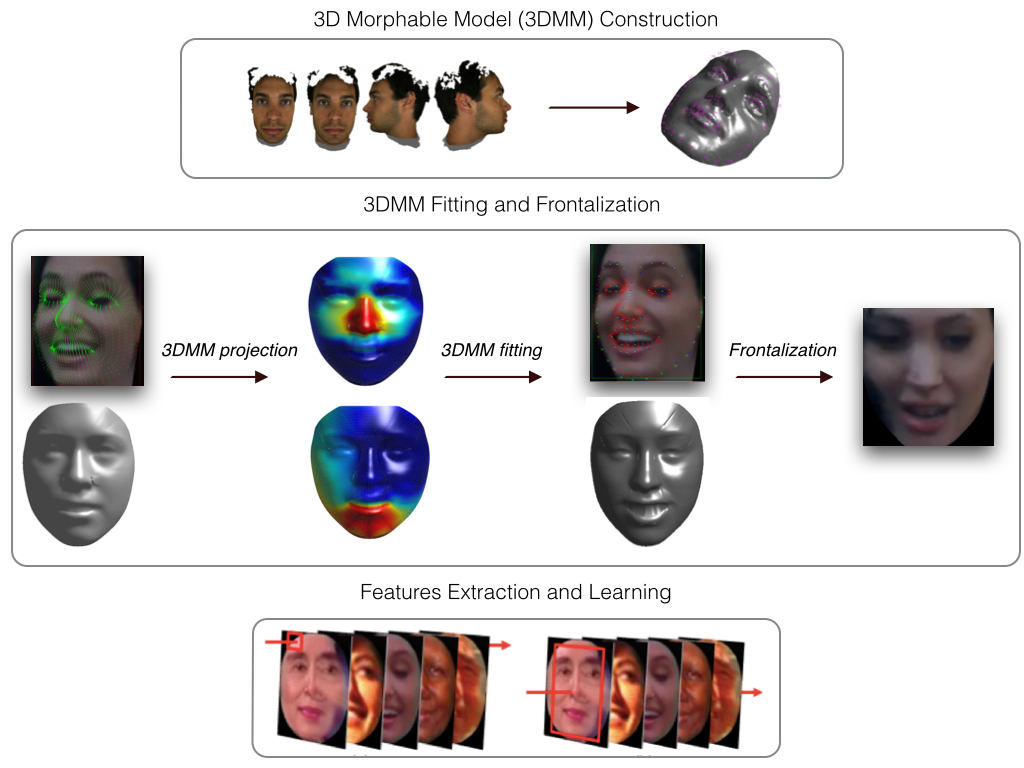

As regards the face recognition, our idea is to exploit both 2D and 3D face information to improve face recognition in the wild: we use the 3D information provided by some 3D face model in order to try to overcome all the well known issues arising when dealing with a “in the wild” 2D face recognition/verification problem, namely: pose, illumination and expression variations.

Practically, we aim at deforming and fitting the 3D face model to a 2D face image so as to represent all the face images in the same canonical form ( frontal ). This should ideally lead to a representation which is somewhat invariant to the above-mentioned issues.

The tracking problem is instead slightly different from the recognition one. In general the goal is to detect and follow a target object through a video sequence. In this case our specific object is a face; the main challenge is that a face can show both rigid and non-rigid movements, which lead to drastic changes in appearance.

For this problem we are developing a solution where the idea is to incrementally learn two trackers, one for the object (the face) and one for its context, to allow long term tracking of a single object. Separating the object itself and its contexts helps to get a more trenchant representation.