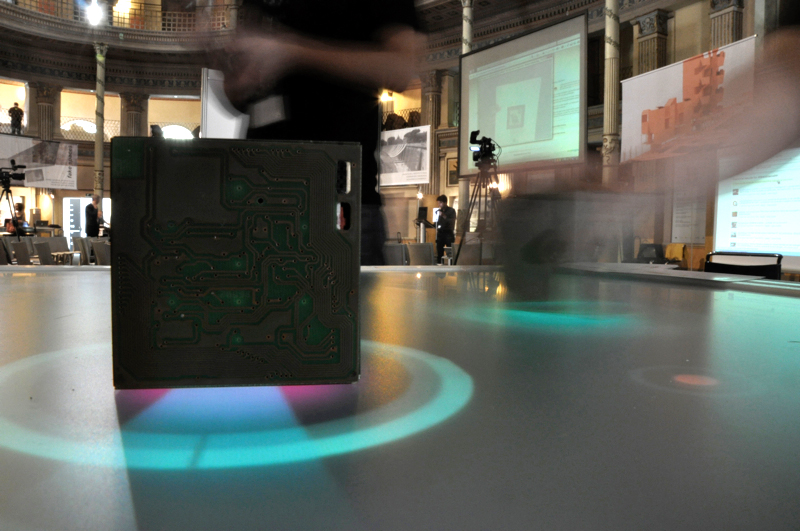

TANGerINE Grape is a collaborative knowledge-sharing system that can be used through natural and tangible interfaces. Its goal is to let users enrich their knowledge by acquiring information both from digital libraries and from the knowledge shared by other users involved in the same interaction session.

TANGerINE Grape

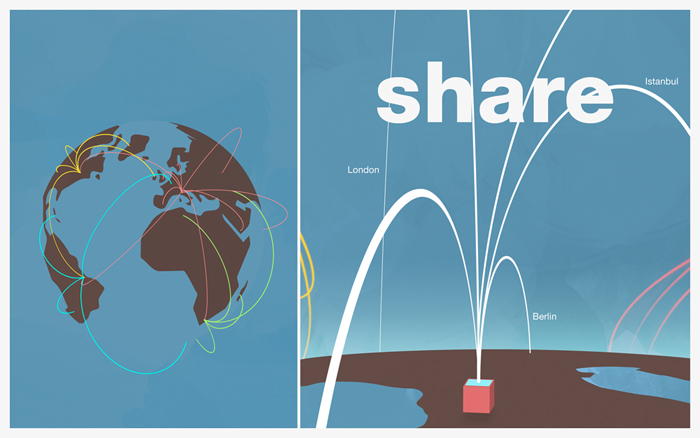

TANGerINE Grape is a collaborative, tangible multi-user interface that allows users to perform semantic-based content retrieval. Multimedia content is organized through knowledge-base management structures (i.e., ontologies), and the interface supports multi-user interaction with them through different input devices in both co-located and remote environments.

TANGerINE Grape enables users to enrich their knowledge by acquiring information both from an automated information system and from the knowledge shared by the other users involved. Unlike a purely web-based interface, our system supports face-to-face collaboration alongside standard remote collaboration: users can interact with the system through different kinds of input devices, whether they are co-located or remote. In this way, users also enrich their knowledge by comparing themselves with the other users involved in the same interaction session: they can share choices, results and comments. Face-to-face collaboration also has a ‘social’ value: co-located people involved in similar tasks improve their personal and professional knowledge of one another in terms of skills, culture, nature, interests and so on.

As a use case, we initially built on the VIDI-Video project and then, to provide faster response times and more advanced search options, moved to the IM3I project, using its semantic search engine to enhance access to video content.

This project has been an important case study in applying natural and tangible interaction research to accessing video content organized in semantic-based structures.